The laws of physics are manifested everywhere in nature. Consider a flowing river: it obeys physical laws and, in effect, solves complex fluid dynamics equations as it flows from one point to another. The laws of physics are always at work, but are not being harnessed in a programmable way. A river, of course, is not considered to be a computer.

A flowing river is governed by complex fluid dynamics.

Flowing rivers and all other natural phenomena constantly obey the laws of physics, but only some types of physics are being harnessed in computation. Consider the following examples.

“Computing comes down to different ways of processing information. The difference between digital and quantum computing is in manipulating bits versus qubits.”

Three Ways Physics is Harnessed in Computation

1. Human Computation

Humans are programmable: our brains harness biophysical processes to receive instructions, process information, and output results. The human brain is often described as the most complex object in the known universe, containing tens of billions of neurons (a commonly cited estimate is about 86 billion) and trillions of synapses connecting them.

One could say the human brain is by far the best computer out there, even capable of creating other kinds of computers. In fact, the word "computer" was first used to describe people who performed calculations by hand.

Humans performing calculations is a type of computation.

A famous example of human computers involves a team of women at NASA. They made important calculations, including how many rockets are required to make a plane airborne, the thrust-to-weight ratio for various aircraft, and trajectory computations for rocket launches. These calculations often filled up several notebooks with data and formulas. These human computers made important contributions to aeronautics and the space program.¹

2. Digital Computation

Digital computers, the most familiar kind of computer, harness the physics of electromagnetism: they receive instructions as electrical signals. The discrete on (1) or off (0) signals are represented by binary digits, also known as bits. Sequences of bits are processed, and the results can be stored or returned. Whatever instructions are entered and whatever programming language is used, the result is the same: to the computer, it is all just bits.
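To make the "just bits" point concrete, here is a minimal Python sketch. The helper name `to_bits` is our own, and UTF-8 is an assumed text encoding; the point is only that text and numbers alike reduce to sequences of 0s and 1s.

```python
def to_bits(data: bytes) -> str:
    """Return the given bytes as a string of 0s and 1s, eight bits per byte."""
    return " ".join(format(byte, "08b") for byte in data)


# Text is stored as bytes (UTF-8 is assumed here), and each byte is eight bits.
text_bits = to_bits("Hi".encode("utf-8"))

# A small integer written as eight binary digits.
number_bits = format(5, "08b")

print(text_bits)    # 01001000 01101001
print(number_bits)  # 00000101
```

The letters and the number look different to us, but both end up as the same kind of on/off pattern.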

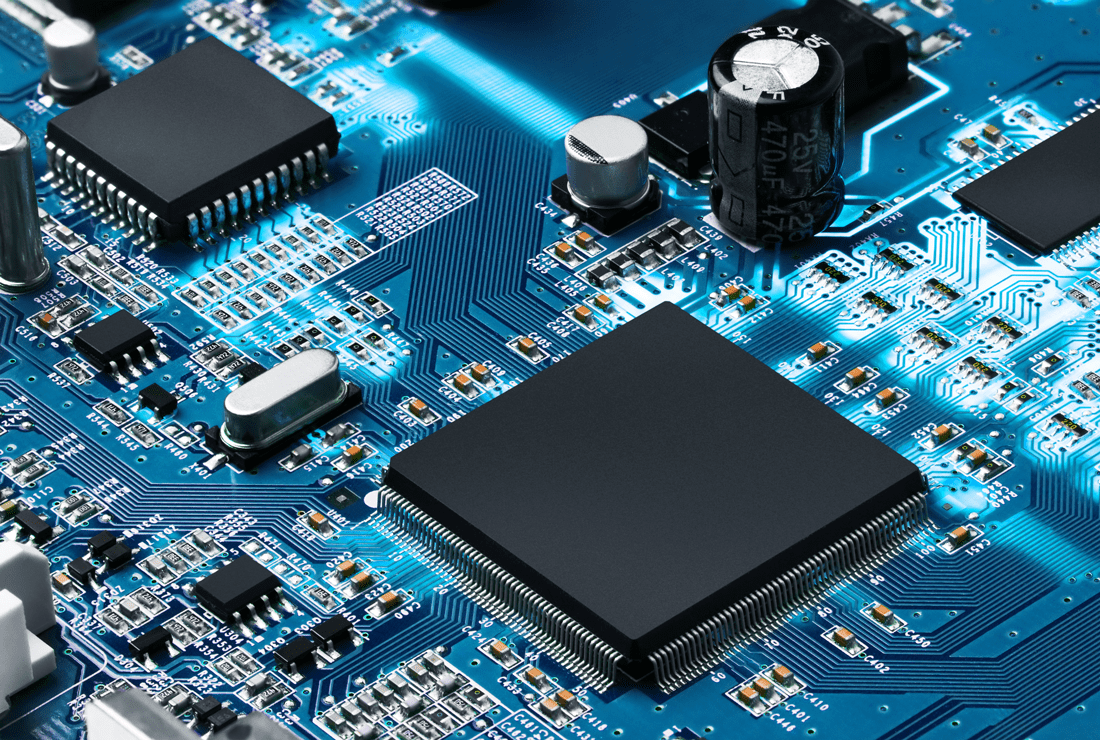

Digital computers are built from transistors, which are semiconductor devices that amplify and switch electronic signals and are used to represent bits. If the voltage at a transistor is above a set threshold, it is interpreted as ‘on’; otherwise, it is considered ‘off’.

An integrated circuit with transistors and other electronic components.

Transistors and other electronic components are assembled on tiny silicon chips known as integrated circuits, which revolutionized the world of electronics. Integrated circuit technology has developed tremendously in a relatively short time: an observation known as Moore’s Law states that the number of transistors packed into an integrated circuit doubles roughly every two years, while the cost per transistor falls.
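The doubling described by Moore's Law is easy to work through numerically. The sketch below assumes an idealized, exact doubling every two years; the function name `projected_transistors` and the starting count of 1,000 are hypothetical values chosen only for illustration.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Idealized Moore's Law: the count doubles once per doubling period."""
    return initial_count * 2 ** (years / doubling_period)


# Starting from a hypothetical 1,000 transistors, ten years is five doublings.
print(projected_transistors(1_000, 10))  # 32000.0
```

Real chips do not follow the curve exactly, but the exponent shows why a steady doubling adds up so quickly: twenty years of it is a factor of about a thousand.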

Laptops, smartphones, and many other digital devices are now woven into the fabric of modern society. This was made possible by the ever smaller size and lower cost of integrated circuits and the subsequent advancement of digital technology.

3. Quantum Computation

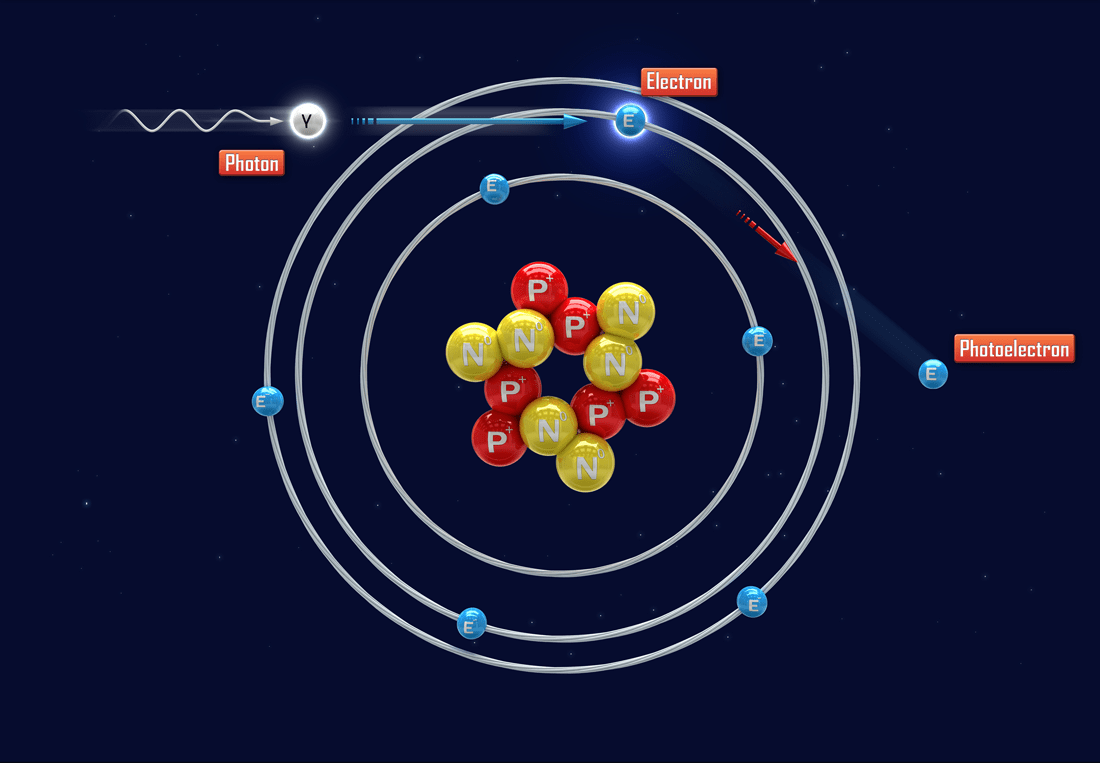

Quantum mechanics started with the idea that the energy of a physical system, such as an atom, can take only discrete values, with the discrete units known as quanta. Light itself was shown to be made up of discrete packets known as photons, where the energy of a photon is proportional to its frequency. These ideas were central to the photoelectric effect, which Albert Einstein explained in 1905 using light quanta.

The photoelectric effect: a photon interacts with an electron that is in a discrete orbit around the nucleus of an atom. The electron will be freed from the atom only if the photon has high enough energy (equivalently, frequency).
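The relationship between photon energy and frequency can be written as E = h·f, where h is Planck's constant. The following Python sketch applies it to the threshold condition in the photoelectric effect. The function names and the 2.3 eV binding energy are illustrative assumptions, not values taken from this article.

```python
PLANCK_CONSTANT = 6.62607015e-34  # joule-seconds (exact SI value)
JOULES_PER_EV = 1.602176634e-19   # joules in one electronvolt (exact SI value)


def photon_energy_ev(frequency_hz: float) -> float:
    """Photon energy E = h * f, converted from joules to electronvolts."""
    return PLANCK_CONSTANT * frequency_hz / JOULES_PER_EV


def frees_electron(frequency_hz: float, binding_energy_ev: float) -> bool:
    """True if a single photon carries at least the energy binding the electron."""
    return photon_energy_ev(frequency_hz) >= binding_energy_ev


BINDING_ENERGY_EV = 2.3  # illustrative assumption, not a measured value

for label, frequency in [("red light", 4.3e14), ("violet light", 7.5e14)]:
    energy = photon_energy_ev(frequency)
    freed = frees_electron(frequency, BINDING_ENERGY_EV)
    print(f"{label}: {energy:.2f} eV, frees electron: {freed}")
# red light: 1.78 eV, frees electron: False
# violet light: 3.10 eV, frees electron: True
```

Note that the outcome depends only on the frequency of each photon, not on how many photons arrive: under this model, brighter red light still frees no electrons.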

The idea of quantization started a revolution in physics that has been the subject of intense research for over a century and has resulted in many applications including the laser, magnetic resonance imaging, electron microscopy, and materials innovation by means of quantum simulation.

In 1982, physicist Richard Feynman proposed the idea of harnessing quantum systems themselves as a powerful way of performing quantum simulation. This proposal is widely viewed as the inception of quantum computing.

Quantum computing processes information by harnessing tiny quantum systems called quantum bits, or qubits. Qubits exhibit behaviour that seems exotic or weird on our macroscopic scale: quantum superposition, entanglement, and the unavoidable fact that the outcomes of measurements in quantum mechanics can be predicted only as probabilities. These features are all contained in the equations of motion of quantum mechanics, the most famous being the Schrödinger equation.
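Superposition and probabilistic measurement can be illustrated with a small classical simulation. In the sketch below, a single qubit is described by two amplitudes, `alpha` for the outcome 0 and `beta` for the outcome 1, and the probability of each outcome is the squared magnitude of its amplitude. The function names are our own, this is ordinary Python rather than a quantum program, and entanglement (which needs more than one qubit) is not covered.

```python
import math
import random


def measurement_probabilities(alpha: complex, beta: complex) -> tuple:
    """Return (P(0), P(1)) for a qubit with amplitudes alpha and beta."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2  # normalize in case the input is not
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Simulate one measurement: returns 0 or 1 with the probabilities above."""
    p_zero, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p_zero else 1


# An equal superposition of 0 and 1.
alpha = beta = 1 / math.sqrt(2)
p_zero, p_one = measurement_probabilities(alpha, beta)
print(p_zero, p_one)  # each is one half, up to floating-point rounding

# Each simulated measurement gives a definite 0 or 1; only the statistics
# over many repetitions reflect the underlying probabilities.
rng = random.Random(0)
samples = [measure(alpha, beta, rng) for _ in range(1000)]
print(sum(samples) / len(samples))  # fraction of 1s, expected to be near 0.5
```

A classical bit would always give the same answer when read. Here, no single measurement reveals the amplitudes, which is part of what makes qubits behave so differently from bits.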

As quantum algorithms based on qubits were developed, promising applications emerged, including quantum cryptography and improved optimization methods. These applications, together with the simulation of quantum systems, are the major drivers behind the quantum computing movement.

Computing comes down to harnessing physics in different ways in order to process information. For example, the difference between digital and quantum computing is in manipulating bits versus qubits.

Subscribe to the 1QBit Blog to keep up with upcoming articles that will dig deeper into the concepts of quantum computing.